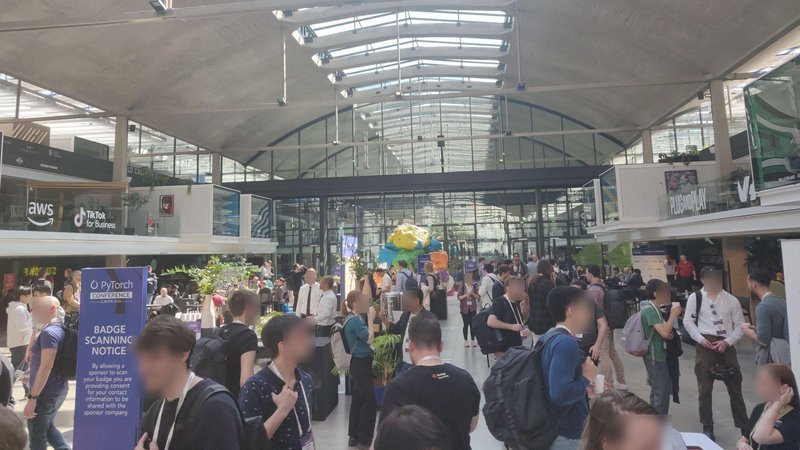

The Paris AI team attended the inaugural PyTorch Conference Europe, held April 7-8 at Station F in Paris. The team has five members, ironically split between Paris and NYC (though historically based in Paris). It focuses on innovative AI topics, such as Computer Vision capabilities in Explore and, more recently, behind-the-scenes work on MIRA.

Context

PyTorchCon Europe 2026, hosted at Station F by the Linux Foundation, was an in-person event aimed at developers and researchers, with roughly 70 talks over two packed days and major players including Meta, NVIDIA, Google, HuggingFace, Qualcomm, IBM, Red Hat, and ARM. The agenda was dense, with keynotes, technical sessions, and a community expo running throughout. A few hundred people attended this first European edition.

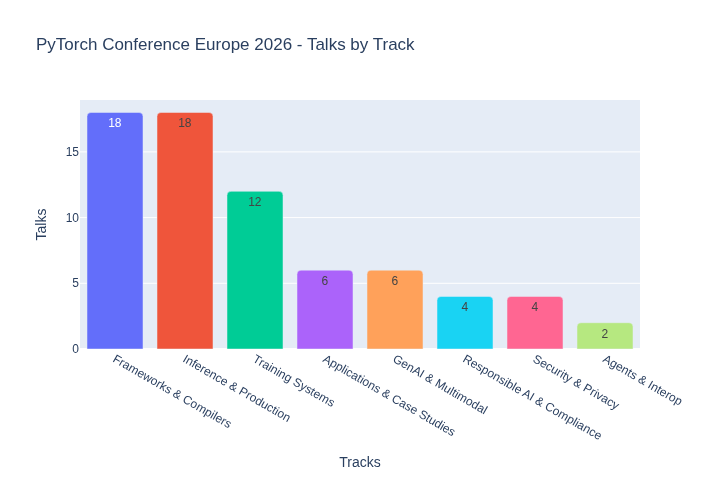

One look at the track breakdown says everything about where the ecosystem’s energy is right now:

Inference and frameworks dominate, together accounting for more than half the scheduled sessions. Training still gets airtime, but the centre of gravity has clearly shifted: the hard problem is no longer building models, it’s running them efficiently at scale. Everything else flows from that.

The inference stack is getting serious

The single clearest signal from the conference is that production LLM inference has matured into a proper systems engineering discipline, with the complexity and tooling diversity to match.

Serving at scale. vLLM has become the de facto open-source inference engine, now part of the PyTorch Foundation, and its ecosystem is expanding fast. KV cache is the central resource to manage, and the community has built sophisticated approaches around it: KV offloading, prefix-cache-aware routing, hierarchical caching, and disaggregated serving. The last of these runs prefill and decode on separate nodes because they have fundamentally different resource profiles: prefill is compute-bound, while decode is bound by memory bandwidth.
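To give a sense of why the KV cache is the resource everything revolves around, here is a back-of-the-envelope sizing sketch. The model dimensions (32 layers, 8 KV heads, head dimension 128, 16-bit values) are illustrative assumptions, not figures from any talk.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_elem=2):
    """Bytes of KV cache held for one sequence.

    The leading factor 2 accounts for storing both keys and values
    in every layer.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_elem


# Illustrative (assumed) model dimensions.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=1)
per_request = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=8192)

print(per_token // 1024, "KiB per token")                   # 128 KiB per token
print(per_request / 2**30, "GiB for one 8192-token request")  # 1.0 GiB
```

With these assumed dimensions, a handful of long concurrent requests already consumes several GiB of accelerator memory, which is why offloading and cache reuse get so much attention.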

llm-d goes further on the orchestration side: it layers a Kubernetes-native inference scheduler on top of vLLM, with cache-aware routing, LoRA¹-aware load balancing, and prefill/decode disaggregation. Several talks covered it directly. The key insight: routing requests on raw GPU utilisation alone is naive. Smarter approaches exploit KV cache locality, since routing a request to the node that already holds its prefix cache cuts recomputation and latency. GPU load is a proxy; cache state is the actual signal.
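The routing idea can be sketched in a few lines. This is a toy illustration under our own assumptions, not the llm-d or vLLM implementation: the block size, the hashing scheme, and the `nodes` structure are all hypothetical.

```python
import hashlib

BLOCK_SIZE = 4  # tokens per cache block (illustrative value)


def prefix_block_hashes(tokens, block_size=BLOCK_SIZE):
    """Hash every full block together with all the tokens before it,
    so two requests share a hash only if they share the whole prefix."""
    hashes = []
    running = hashlib.sha256()
    full = len(tokens) - len(tokens) % block_size
    for start in range(0, full, block_size):
        running.update(repr(tokens[start:start + block_size]).encode())
        hashes.append(running.hexdigest())
    return hashes


def cached_prefix_blocks(request_hashes, node_cache):
    """Number of leading blocks of the request already cached on a node."""
    count = 0
    for h in request_hashes:
        if h not in node_cache:
            break
        count += 1
    return count


def pick_node(tokens, nodes):
    """Prefer the longest cached prefix; break ties by lowest load.

    nodes: hypothetical dict of name -> {"cache": set of hashes, "load": float}
    """
    req = prefix_block_hashes(tokens)
    return max(
        nodes,
        key=lambda n: (cached_prefix_blocks(req, nodes[n]["cache"]), -nodes[n]["load"]),
    )


system_prompt = list(range(8))
nodes = {
    "a": {"cache": set(prefix_block_hashes(system_prompt)), "load": 0.9},
    "b": {"cache": set(), "load": 0.1},
}
request = system_prompt + [100, 101, 102, 103, 104]
print(pick_node(request, nodes))  # a
```

A load-only router would send this request to node `b`; the cache-aware one picks the busier node `a` because two of the request's three blocks are already cached there. A production scheduler would weigh both signals rather than rank strictly by cache hits.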

torch.compile featured heavily across sessions. It’s no longer an experimental feature; it’s a core part of the vLLM inference pipeline, with the PyTorch compiler team actively upstreaming inference-specific features.

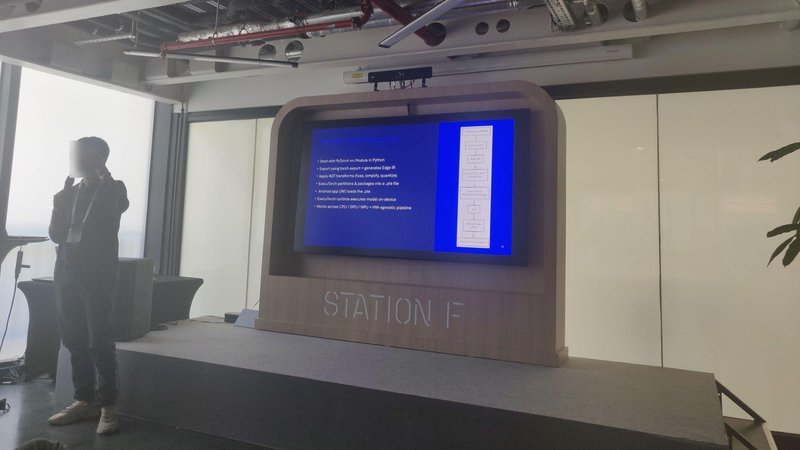

ExecuTorch joined PyTorch Core on day one of the conference. This is a governance move as much as a technical one: it gives hardware vendors and platform partners a neutral, vendor-agnostic foundation to build on for on-device inference, with clear intellectual property, branding, and a multi-stakeholder roadmap. In practice, it signals that the foundation takes edge inference as seriously as server-side inference.

Quantization has moved well past FP16/BF16. The dominant formats now are INT8, MXFP8, and NVFP4, the last of which gets dedicated hardware acceleration on NVIDIA Blackwell. Multiple sessions covered different quantization approaches, and AMD presented on near-lossless MXFP4 compression.
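One idea these low-precision formats build on is sharing a scale factor across a small block of values instead of across the whole tensor. The sketch below shows that idea with plain INT8 values and a floating-point scale; it is a simplification for illustration and does not reproduce the MXFP8, MXFP4, or NVFP4 encodings. The block sizes and weights are made up.

```python
def quantize_block(values, num_bits=8):
    """Symmetric quantization of one block with a single shared scale."""
    qmax = 2 ** (num_bits - 1) - 1  # 127 for 8 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def roundtrip(values, block_size):
    """Quantize block by block, then dequantize back to floats."""
    restored = []
    for start in range(0, len(values), block_size):
        quantized, scale = quantize_block(values[start:start + block_size])
        restored.extend(q * scale for q in quantized)
    return restored


# Four small weights followed by four large ones (made-up values).
weights = [0.01, -0.02, 0.03, 0.015, 8.0, -4.0, 2.0, 1.0]

coarse = roundtrip(weights, block_size=8)  # one scale for the whole tensor
fine = roundtrip(weights, block_size=4)    # one scale per block of four

print(coarse[:4])  # the small weights all collapse to zero
print(fine[:4])    # the small weights survive with a small error
```

With a single scale, the large weights dictate the step size and the small ones round to zero. Per-block scales keep both ranges representable at the cost of storing one extra scale per block.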

The kernel layer is getting crowded (in a good way)

One of the more interesting subplots of the conference was the proliferation of GPU kernel authoring tools, all solving the same problem from different angles: writing high-performance GPU kernels is hard, error-prone, and hardware-specific. The field is trying to fix that.

Triton (OpenAI) remains the Schelling point: a tile-based Python DSL that compiles down to hardware-specific code and has become the common compilation target for higher-level abstractions.

Helion (Meta) sits one level above Triton: a Python-embedded DSL that compiles to Triton, with an autotuning engine that searches thousands of Triton configurations automatically. An attention kernel in Helion is around 30 lines vs 120 in Triton. The trade-off is that the initial autotuning pass takes around 10 minutes, after which optimal configurations are baked in.

cuTile / CuTe (NVIDIA) are NVIDIA’s answer to the ergonomics problem. CuTe is the lower-level C++ abstraction for tile-based tensor operations; cuTile (released in CUDA 13.1) brings the same tile-based model to Python, letting developers write sequential array-centric code and leaving thread and memory mapping to the compiler. Both are widely read as a strategic move to keep kernel development inside the CUDA ecosystem as Triton’s adoption grows.

HuggingFace Kernels Hub rounds this out at the distribution layer: a cloud repository of pre-compiled GPU kernels (FlashAttention, quantization ops, etc.), now a first-class repo type on the Hub alongside Models and Datasets. The pain it solves is real: compiling FlashAttention correctly across CUDA versions and GPU architectures has always been a fragile, time-consuming process. One line of code to pull a pre-compiled kernel for your specific environment is a genuine quality-of-life improvement. It supports NVIDIA CUDA, AMD ROCm, Apple Metal, and Intel XPU.

The takeaway: the kernel layer is stratifying. Experts write CUDA/Triton/cuTile. Applied ML engineers will increasingly reach for Helion or Kernels Hub. Both layers are maturing simultaneously.

Hardware and software are co-evolving around MoE

NVIDIA’s presence was significant, and one of the more interesting threads was how hardware design is now explicitly accounting for model architecture patterns, particularly Mixture of Experts (MoE).

MoE models like DeepSeek-R1 create unusual communication patterns: expert routing involves all-to-all collective operations rather than the all-reduce patterns dominant in dense model training. The latest NVIDIA racks (like the GB300 NVL72) provide intra-rack bandwidth sized for exactly this. Dedicated sessions covered optimizations for such hardware across compute, communication, and more. Explicitly inference-oriented hardware is now on NVIDIA’s agenda, although the idea is not new: other vendors, such as Cerebras and Groq, already focus on it. The co-design story, where software teams (vLLM, SGLang) work closely with NVIDIA hardware teams to saturate the new interconnects, was a recurring theme across multiple talks.
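A small simulation shows where the all-to-all pattern comes from. The setup is hypothetical: two ranks, four experts split evenly across them, top-2 routing, and hand-written router scores.

```python
def top_k_experts(scores, k):
    """Indices of the k highest router scores for one token."""
    return sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)[:k]


def all_to_all_counts(router_scores_per_rank, num_experts, k):
    """send[src][dst] = number of token copies rank src ships to rank dst.

    Assumes experts are split evenly and contiguously across ranks.
    """
    num_ranks = len(router_scores_per_rank)
    experts_per_rank = num_experts // num_ranks
    send = [[0] * num_ranks for _ in range(num_ranks)]
    for src, token_scores in enumerate(router_scores_per_rank):
        for scores in token_scores:
            for expert in top_k_experts(scores, k):
                send[src][expert // experts_per_rank] += 1
    return send


# Router scores for two tokens on each rank (made-up values).
# Experts 0 and 1 live on rank 0; experts 2 and 3 live on rank 1.
scores = [
    [[0.1, 0.6, 0.2, 0.1], [0.05, 0.05, 0.5, 0.4]],  # tokens held by rank 0
    [[0.7, 0.2, 0.05, 0.05], [0.3, 0.1, 0.2, 0.4]],  # tokens held by rank 1
]

print(all_to_all_counts(scores, num_experts=4, k=2))  # [[1, 3], [3, 1]]
```

Every rank ends up sending to every other rank, and the volume depends on what the router decides for each token. That differs from all-reduce, where the traffic pattern is fixed in advance.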

Edge is becoming strategic

Edge inference had a visible presence, which felt fairly new for this ecosystem (at least, that was our impression). Qualcomm was there. ARM had multiple sessions. Google’s day 2 keynote focused on Gemma 4, with a demo running on a phone.

ExecuTorch going into PyTorch Core is part of this: it’s the official story for how PyTorch models get to mobile, AR/VR, and embedded targets. The fact that it’s now a foundation project rather than a Meta-internal project lowers the barrier for Qualcomm, ARM, and others to invest in it as neutral infrastructure.

This connects to a broader trend: as model quality at small parameter counts improves (driven partly by distillation from large frontier models), on-device inference becomes increasingly viable for a wider class of applications. The hardware vendors are clearly anticipating this.

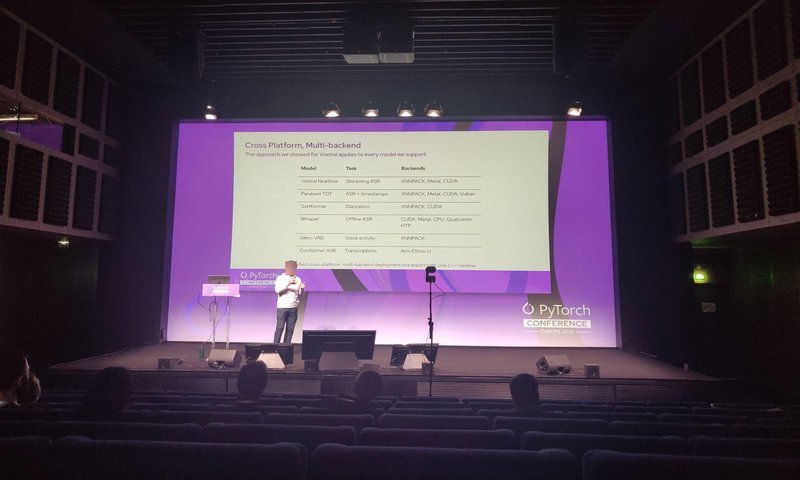

When streaming shapes the model

Mistral gave an interesting talk on the architecture behind Voxtral, their open-weight speech stack. The ASR side (Voxtral Realtime) is trained end-to-end for streaming via a Delayed Streams Modeling framework², using a causal encoder that processes audio left-to-right rather than the bidirectional attention used by models like Whisper. This makes true real-time transcription possible without chunking hacks, with a configurable latency/accuracy trade-off.
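The difference between causal and bidirectional attention is easy to see on the attention mask itself. This is a generic illustration of the two mask types, not Voxtral's architecture; the function names and frame count are our own.

```python
def attention_mask(num_frames, causal):
    """mask[q][k] is True when query frame q may attend to key frame k."""
    return [
        [(k <= q) if causal else True for k in range(num_frames)]
        for q in range(num_frames)
    ]


def last_frame_needed(mask, frame):
    """Latest input frame that must have arrived before `frame` can be encoded."""
    return max(k for k, allowed in enumerate(mask[frame]) if allowed)


NUM_FRAMES = 6  # illustrative clip length in audio frames

causal = attention_mask(NUM_FRAMES, causal=True)
bidirectional = attention_mask(NUM_FRAMES, causal=False)

print(last_frame_needed(causal, 0))         # 0: encode as soon as the frame arrives
print(last_frame_needed(bidirectional, 0))  # 5: must wait for the whole clip
```

With a bidirectional mask, even the first frame depends on the last one, so a streaming system has to wait or chunk the audio. With a causal mask, each frame can be encoded as soon as it arrives.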

On training and evaluation

Training hasn’t been abandoned. The ecosystem has structured itself considerably over the past two years: TorchTitan (the PyTorch-native large-scale training framework), TorchFT (fault tolerance), and the broader meta-pytorch tooling suite all featured.

Several talks addressed the growing difficulty of LLM evaluation. Traditional benchmarks are losing credibility due to data contamination, overfitting, and a mismatch between static test settings and real-world usage (particularly now that models can call external tools). High benchmark scores no longer imply real capability, and limited transparency around how results are reported makes model comparison harder still. The problem compounds for agentic systems, where multi-step planning and tool interaction make results acutely sensitive to implementation details and hard to reproduce across setups. The emerging consensus points toward treating evaluation as a first-class engineering concern: standardized methods, modular and transparent tooling (Nemo-Evaluator being one concrete example), and metrics that capture reasoning and tool interaction across the full model lifecycle (not just final outputs on a fixed benchmark).

What it means for us

The conference confirmed several directions that are directly relevant to the AI platform we’re building at Meltwater.

The maturity of the inference stack matters: as production serving becomes more standardized, the operational complexity of running our own model inference decreases. Cache-aware routing and prefill/decode disaggregation are increasingly first-class features, not bespoke engineering.

Improvements in inference and streaming, sometimes baked into the model’s architecture itself, are key for us: a responsive AI is part of the user experience.

More broadly, the pace of improvement across the stack, from kernel tooling to quantization formats to hardware, continues to accelerate. Capabilities that required significant bespoke engineering 12 months ago are now one pip install away. The implication for us is that we should be raising our ambition on what’s feasible within the product, and investing less in infrastructure and more in the application layer where we have genuine differentiation.

If you’d like to watch the talks yourself, they will soon be uploaded to the PyTorch YouTube channel.

1. LoRA: Low-Rank Adaptation of Large Language Models, Hu et al., 2021, https://doi.org/10.48550/arXiv.2106.09685

2. Streaming Sequence-to-Sequence Learning with Delayed Streams Modeling, Zeghidour et al., 2025, https://doi.org/10.48550/arXiv.2509.08753