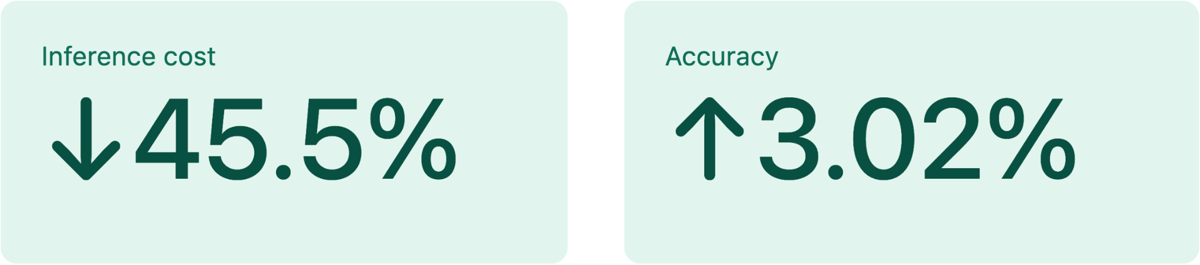

In most technology systems, there is a fundamental trade-off between cost and performance. If you want better accuracy, you typically need larger models, more compute, or more processing time, all of which increase cost. If you want to reduce cost, you usually have to accept lower accuracy, slower insights, or reduced coverage. In our case, we were able to improve both at the same time. We reduced inference costs by 45.5% while also improving accuracy by 3.02%. That combination is unusual, and the path to getting there is worth sharing.

The Problem: Understanding Sentiment at the Entity Level

We process millions of documents across news and social media every day. These documents often mention multiple companies, products, or people, and our customers care about how each of those entities is perceived.

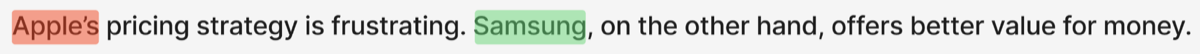

Traditional sentiment analysis assigns a single label to an entire document. But that breaks down quickly in real-world scenarios.

Consider for example:

A document-level model might label this as Neutral. But that loses the real insight:

- Apple -> Negative (pricing criticism)

- Samsung -> Positive (favorable comparison)

Entity-Level Sentiment (ELS) solves this by answering a more precise question:

What is the sentiment toward each entity mentioned in a document?

This is significantly more useful, but also much more computationally expensive.

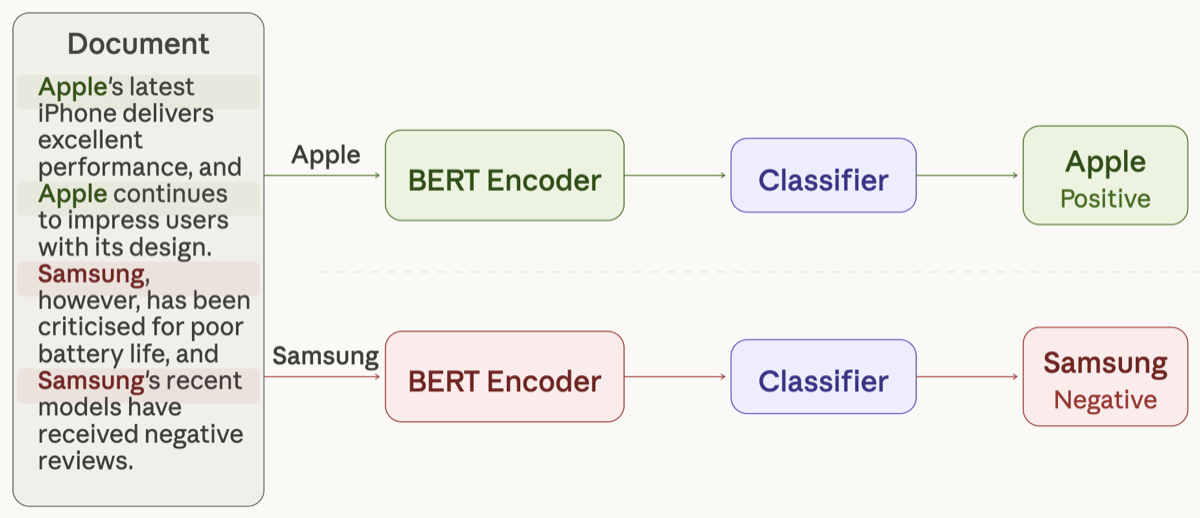

The First Approach (v1): Accurate but Expensive

Our initial system approached ELS as a question-answering problem.

For each entity in a document:

- We locate all mentions of that entity

- Extract a context window around each mention

- Pass that context, along with the entity, into the model

- Predict sentiment (positive, negative, or neutral)
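The per-entity loop above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the production code: `context_windows`, `v1_sentiment`, and `toy_model` are hypothetical names, the character-based window width and the joining of windows are assumptions, and the keyword-matching `toy_model` merely stands in for the real question-answering model.

```python
import re
from typing import Callable, Dict, List, Tuple


def context_windows(text: str, entity: str, width: int = 30) -> List[str]:
    """Return a character window around every mention of `entity` (assumed windowing)."""
    windows = []
    for match in re.finditer(re.escape(entity), text):
        start = max(0, match.start() - width)
        end = min(len(text), match.end() + width)
        windows.append(text[start:end])
    return windows


def toy_model(context: str, entity: str) -> str:
    """Hypothetical placeholder for the QA-style model: crude keyword matching."""
    lowered = context.lower()
    if "overpriced" in lowered:
        return "negative"
    if "better value" in lowered:
        return "positive"
    return "neutral"


def v1_sentiment(
    text: str, entities: List[str], model: Callable[[str, str], str]
) -> Tuple[Dict[str, str], int]:
    """Run the model once per entity and count how many runs were needed."""
    results: Dict[str, str] = {}
    model_runs = 0
    for entity in entities:
        context = " ... ".join(context_windows(text, entity))
        results[entity] = model(context, entity)  # one model run per entity
        model_runs += 1
    return results, model_runs


# Made-up document and entity names, for illustration only.
doc = "Acme's new phone is overpriced. Meanwhile, reviewers say Globex offers better value."
results, model_runs = v1_sentiment(doc, ["Acme", "Globex"], toy_model)
print(results, model_runs)
```

The point of the sketch is the loop structure: the number of model runs equals the number of entities, regardless of how much of the text the windows share.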

This worked well in terms of accuracy.

But it had a fundamental limitation: its cost scaled linearly with the number of entities.

A document with 10 entities required 10 separate model runs. At our scale, where documents often contain multiple entities, this quickly became expensive.

The core issue was simple:

We were making the model re-read the same document multiple times.

The Key Insight: Stop Re-Reading the Document

This led us to a simple but important question:

Does the model really need to process the entire document again for every entity?

The answer turned out to be no.

Transformer models build contextual representations for every token in a document during a single forward pass. By the time the model has processed the document once, it already has a rich understanding of:

- What entities are present

- Where they appear

- How they relate to surrounding context

Running the model again for a different entity doesn’t add new information. It just repeats work that has already been done.

That realization pointed directly to a more efficient design.

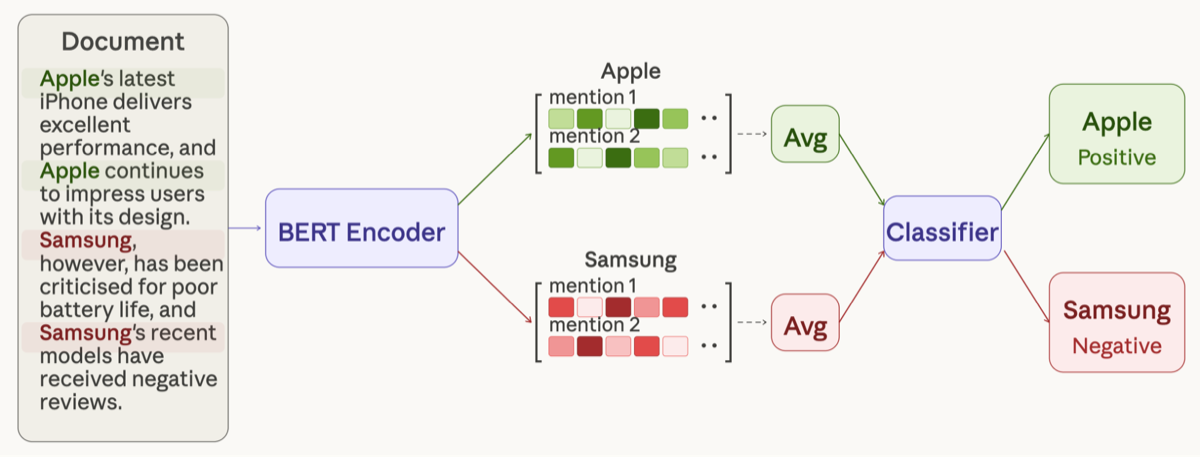

The New Approach (v2): Read Once, Understand Everything

We redesigned the system around a simple principle:

Process the document once, and extract everything you need from that shared understanding.

The updated approach works as follows:

1. Single-pass encoding

We process the document once, allowing the model to build contextual representations for all tokens.

2. Entity-specific extraction

From this shared representation, we extract embeddings corresponding to each entity mention.

3. Aggregation across mentions

If an entity appears multiple times, we combine its mention-level representations (by averaging) into a single representation.

4. Sentiment prediction

This aggregated representation is used to predict sentiment for the entity.
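The four steps above can be sketched with NumPy as follows. This is a sketch under stated assumptions rather than the actual implementation: `encode` is a hypothetical stand-in that returns random vectors instead of running a real transformer, mean-pooling the tokens inside each mention span is an assumption (the text only specifies averaging across mentions), and the linear prediction head uses untrained random weights, so the predicted labels are meaningless.

```python
import numpy as np

LABELS = ["negative", "neutral", "positive"]


def encode(tokens, dim=8, seed=0):
    """Hypothetical stand-in for one transformer forward pass: one vector per token."""
    rng = np.random.default_rng(seed)
    return rng.normal(size=(len(tokens), dim))


def entity_representation(token_vectors, mention_spans):
    """Pool the tokens of each mention span, then average across all mentions."""
    mention_vectors = [
        token_vectors[start:end].mean(axis=0) for start, end in mention_spans
    ]
    return np.mean(mention_vectors, axis=0)


def predict_all(tokens, entity_spans, weights, bias):
    """Encode the document once, then score every entity from the shared vectors."""
    token_vectors = encode(tokens)  # step 1: single-pass encoding
    predictions = {}
    for entity, spans in entity_spans.items():
        rep = entity_representation(token_vectors, spans)  # steps 2 and 3
        logits = rep @ weights + bias  # step 4: linear head (assumed)
        predictions[entity] = LABELS[int(np.argmax(logits))]
    return predictions


# Made-up tokens and half-open (start, end) token spans, for illustration only.
tokens = "Acme phone is overpriced while Globex offers better value than Acme".split()
entity_spans = {"Acme": [(0, 1), (10, 11)], "Globex": [(5, 6)]}

rng = np.random.default_rng(1)
weights = rng.normal(size=(8, len(LABELS)))  # untrained, illustrative only
bias = np.zeros(len(LABELS))

print(predict_all(tokens, entity_spans, weights, bias))
```

Note that `encode` is called exactly once per document; everything inside the per-entity loop is indexing, averaging, and a small matrix product.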

What Changed

- Instead of one model run per entity, we now have one model run per document

- Instead of recomputing context, we reuse it across all entities

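This removes the encoder's dependency on the number of entities. With n entities, v1 needed n model runs per document. v2 needs one model run plus a lightweight extraction and prediction step per entity, so the cost per document is much closer to constant.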
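A back-of-the-envelope cost model makes the difference concrete. The function names and the unit costs below are hypothetical and chosen only for illustration; they are not measurements from the system, and the model simplifies v1 by assuming each run costs about as much as one full encoding pass.

```python
def v1_cost(num_entities: int, encoder_cost: int, head_cost: int) -> int:
    """v1: every entity pays for its own model run plus a prediction."""
    return num_entities * (encoder_cost + head_cost)


def v2_cost(num_entities: int, encoder_cost: int, head_cost: int) -> int:
    """v2: one shared encoding pass, then a cheap prediction per entity."""
    return encoder_cost + num_entities * head_cost


# Illustrative units only: an encoder pass assumed 100x the cost of a head.
print(v1_cost(10, encoder_cost=100, head_cost=1))  # 1010
print(v2_cost(10, encoder_cost=100, head_cost=1))  # 110
```

Under these assumed numbers, the per-entity term in v2 is small enough that adding entities barely moves the total, which is what "closer to constant" means here.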

The Results

The impact was significant across both cost and performance: inference costs fell by 45.5%, and accuracy improved by 3.02%.

The accuracy gain came from an important side effect: the efficiency improvements allowed us to deploy a larger, more capable model within the same resource constraints.

What Surprised Us

Two things stood out during this transition.

1. Simple aggregation worked better than expected

We initially assumed that averaging mention representations would be a rough approximation. In practice, it proved to be surprisingly robust.

For entities mentioned multiple times across a document, aggregation often produced more stable and reliable representations than the previous approach.
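One plausible intuition for this stability, offered as an assumption rather than a measured result, is that averaging several noisy mention vectors cancels part of the noise. The toy simulation below illustrates only that statistical effect: the function `mean_error`, the constant "true" vector, and the independent Gaussian noise are all hypothetical and say nothing about real embeddings.

```python
import numpy as np


def mean_error(num_mentions, trials=2000, dim=16, noise=1.0, seed=0):
    """Average distance between the pooled mention vector and the true signal."""
    rng = np.random.default_rng(seed)
    signal = np.ones(dim)  # assumed "true" entity representation
    mentions = signal + noise * rng.normal(size=(trials, num_mentions, dim))
    pooled = mentions.mean(axis=1)  # average across mentions
    return float(np.linalg.norm(pooled - signal, axis=1).mean())


single_mention_error = mean_error(num_mentions=1)
five_mention_error = mean_error(num_mentions=5)
print(single_mention_error, five_mention_error)
```

In this simplified setting the pooled vector from five mentions lands closer to the signal than a single mention does, which is consistent with the behaviour described above but does not prove it is the cause.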

2. Less context was enough

We expected that removing broader surrounding context might hurt performance.

But in practice, focusing on mention-level context was sufficient. The model was still able to capture the necessary signals to make accurate predictions.

This suggests that, for ELS, relevant local context carries most of the signal, even if broader context can still help in edge cases.

What This Means Going Forward

Our initial approach wasn’t wrong. It was a reasonable way to tackle a complex problem.

But it was built on an assumption that didn’t hold:

That the model needed to process each entity independently.

By questioning that assumption, we uncovered a much more efficient approach.

This shift doesn’t just reduce cost. It fundamentally changes what’s possible:

- We can process more data in real time

- We can support more entities per document at little additional cost

- We can deploy stronger models within the same infrastructure

And most importantly, it gives us a scalable foundation to build on as we continue to improve Brand Sentiment.

Final Thought

Sometimes the biggest gains don’t come from adding more complexity.

They come from recognizing and eliminating unnecessary work.

In our case, the breakthrough was simple:

Stop making the model read the same document over and over again.