The volume of brand mentions online is exploding. With this explosion comes an expectation that the “owners” of these brands will not only participate in the conversation, but also take in this feedback and make the necessary adjustments to products, ads, distribution, customer service, etc. To do this effectively, Meltwater needs to help company executives go well beyond a simple positive/negative analysis of these brand mentions and provide a clear picture of who is saying what about their brands.

Within the context of an mLabs research project, Balázs Gődény of our NLP team in Budapest built a prototype to help us understand what other insights (or sentiment dimensions) could be uncovered from brand mentions. In this article Balázs discusses the most important findings.

What is sentiment analysis today?

The goal of sentiment analysis is to determine the attitude of the writer with respect to some topic or the overall polarity of a document. In its simplest form the aim is to learn whether the writer is for or against the topic she writes about. Does she like or dislike the subject? Most sentiment extraction tools assign a single numeric score, e.g. in the range from -1 to 1, that represents the author’s attitude or overall evaluation. Meltwater’s current sentiment extraction solution is one such system.

In a recent internal research project we tried to investigate the feasibility of going beyond this one dimensional sentiment extraction approach. Can we do better, can we extract more colourful information? Can we do this with a reasonable effort? Our main use case in mind was helping product managers analyse what people say on Twitter about their products, services and brands. We would like to answer questions like: what are the emotions people express, what is the target of their emotions (which feature of the product), what is the context in which they use the product, what is the purchase status of the customers regarding the product (are they using it already, are they intending to buy it)?

In summary it seems that it is possible to answer such questions with an acceptable accuracy and without investing huge resources into developing the solution. This post tries to give some interesting details and learning points of this investigation.

What is beyond one dimensional sentiment?

Naturally we started the project by trying to define what kind of insights we would like to mine from product related tweets and how we can measure how well we are doing. And naturally, defining these took quite a long time. This is neither new nor surprising, but let’s already mark it as a learning point: agreeing on requirements and setting expectations and quality metrics right is significant work that should not be underestimated when planning a project. Eventually we ended up with a list of sentiment related dimensions that we would like to target.

Instead of giving a strict definition for each of them, I will illustrate them using the following tweet:

“Backpacking through wonderful Europe or traversing the Pacific Rim, S Translator on GALAXY S4 is the perfect travel mate”.

The dimensions that we are interested in when assessing the sentiment of this tweet are:

- Polarity of sentiment: very positive. The user seems to like it a lot.

- Emotion bearing words: “perfect”.

- Attributes: “wonderful”.

- Topic/Product/Feature: “S Translator”, “GALAXY S4”. These are the targets of the emotion expressed in the text.

- Context/Activity: “Backpacking”, “Europe”, “Traversing”, “Pacific Rim”. These phrases relate to where and how the product is used, consumed, interacted with.

- Pre/Post purchase status: Post purchase. We can be sure from the text that the customer has already purchased the product.

- Question: None

Do humans agree on sentiment?

As to how to measure our quality, first we tried to establish the human agreement level for each of these dimensions. If it turns out that even people can’t agree on the expected output of the algorithm then it is not realistic to expect the machine to do well. Actually even if it did well, we wouldn’t be able to recognize it. So we took 200 tweets on 20 different topics and asked a couple of colleagues to categorize/annotate them, i.e. tell what the sentiment polarity was, what the emotion bearing words were, etc.

“In our tests, even humans only agreed in 69% of the cases on the sentiment of a piece of text.”

When we tried to classify the tweets into the 3 classes positive, neutral and negative, we agreed on the sentiment polarity in 69% of the cases. If we used 5 classes instead, adding “very positive” and “very negative” to the list of choices, the agreement dropped to 52%. These seemingly low results set an upper bound on what we can expect from the machine in the best case. For the emotion bearing words, topics and contexts the human agreement is even lower: 25%, 27% and 40%, respectively.

On the other hand, people seem to agree quite often on the purchase cycle status (65%), on the attributes (60%) and - not surprisingly - on whether the tweet contains a product related question (96%). Note that low agreement scores can mean either that our definitions are bad, fuzzy, under-specified or contradictory, or that the task is very hard to do consistently even for humans. In the sentiment domain the latter is a well known fact, as reported previously by many researchers. We suspect that to some extent the former is also true, but it is very hard to tell these factors apart.
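The agreement figures above could be computed in several ways, and this post does not say which one was used. As a minimal sketch, assuming simple pairwise percent agreement, the following shows why moving from 5 classes to 3 tends to raise the score. The function name, the label mapping and the sample annotations are all made up for illustration.

```python
from itertools import combinations

# Hypothetical mapping used to collapse the 5-class labels into 3 classes.
FIVE_TO_THREE = {
    "very positive": "positive",
    "positive": "positive",
    "neutral": "neutral",
    "negative": "negative",
    "very negative": "negative",
}


def pairwise_agreement(annotations):
    """Fraction of (annotator pair, tweet) comparisons with identical labels.

    `annotations` is a list with one label list per annotator; all label
    lists must cover the same tweets in the same order.
    """
    matches = 0
    total = 0
    for labels_a, labels_b in combinations(annotations, 2):
        for a, b in zip(labels_a, labels_b):
            matches += int(a == b)
            total += 1
    return matches / total if total else 0.0


# Invented annotations for four tweets by two annotators.
annotator_1 = ["very positive", "neutral", "negative", "positive"]
annotator_2 = ["positive", "neutral", "very negative", "neutral"]

agreement_5 = pairwise_agreement([annotator_1, annotator_2])
agreement_3 = pairwise_agreement(
    [[FIVE_TO_THREE[label] for label in annotator_1],
     [FIVE_TO_THREE[label] for label in annotator_2]]
)
print(agreement_5, agreement_3)
```

Percent agreement does not correct for agreement by chance; a chance-corrected statistic would be a reasonable alternative, but that is beyond what this sketch shows.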

State of the art

The next phase was to research the state of the art, meaning what others have already achieved in similar tasks. We found commercial tools that do something similar to what we need, and some of these have online demos that we can use to benchmark our solution. There is also a seemingly endless number of scientific publications in the field (see the references at the end of this article).

The main learning points from this research phase were:

Most of the elements of what we need are already done but no product has everything we want. And even the parts that do exist are slightly different from what we need.

Research papers talk about trivial solutions, simple solutions and very complex solutions. Actually they don’t talk about any of these, they talk about math, but these are the things that lie behind the formulas.

- Trivial solutions are used as a baseline, just for the sake of comparison. They take no time to implement and perform poorly.

- Simple solutions take longer to create and typically perform at an acceptable level.

- Complex state of the art solutions involve lots of effort, huge complexity and big machinery, yet their performance is only a couple of percent better than that of the simple solutions.

To me it seems that in a commercial setup the extra effort of moving from a simple solution to a complex one, just to fight for an additional percent or two in accuracy, simply isn’t worth it.

Our approach

Considering our requirements (lots of them), time constraints (very tight) and the resources (limited as always) we opted for extending some existing approaches adopted by academia and industry alike. Without giving full details of our solution we ended up with a prototype that is mostly based on ideas found in the SentiStrength project. Like SentiStrength we followed a simple, dictionary-based approach, in which we tried to match the words of a tweet with a static list of emotion bearing words. These words come with an associated polarity value, so during processing we simply sum up the positive and negative scores and decide on the final polarity based on these scores.
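To make the dictionary-based idea concrete, here is a minimal sketch of summing positive and negative word scores and deriving a polarity label from them. It is not our prototype and not SentiStrength's actual algorithm: the tiny lexicon, its score values and the decision rule (the sign of the total) are assumptions chosen only for illustration.

```python
import re

# Hypothetical mini-lexicon: word -> polarity score. A real list would be
# far larger and more carefully weighted.
LEXICON = {
    "perfect": 3,
    "love": 3,
    "good": 1,
    "bad": -1,
    "awful": -3,
    "hate": -3,
}


def polarity(tweet, lexicon=LEXICON):
    """Return (label, positive_sum, negative_sum) for a tweet."""
    words = re.findall(r"[a-z']+", tweet.lower())
    scores = [lexicon[word] for word in words if word in lexicon]
    positive = sum(score for score in scores if score > 0)
    negative = sum(score for score in scores if score < 0)
    total = positive + negative
    if total > 0:
        label = "positive"
    elif total < 0:
        label = "negative"
    else:
        label = "neutral"
    return label, positive, negative


example = ("Backpacking through wonderful Europe or traversing the Pacific Rim, "
           "S Translator on GALAXY S4 is the perfect travel mate")
print(polarity(example))
print(polarity("This is awful, I hate it"))
```

A lookup like this ignores negation, intensifiers and sarcasm, which is one reason such a baseline stays simple to build but limited in accuracy.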

Finding topics, activities and attributes is done by running part of speech tagging and trying to find the relevant nouns or noun phrases, verbs and adjectives, respectively. The keyword here is “relevant”, as this is the most important point of the algorithm and the one that is the most difficult to do correctly. By the way, we don’t do it correctly either. I’m afraid that telling whether a certain verb refers to our target noun would require sentence parsing, which, due to our requirements, was not available to us in this project. Extraction of the purchase status works by a simple pattern matching approach based on a couple of handcrafted rules.
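As an illustration of what handcrafted pattern matching for purchase status might look like, here is a small sketch. The rules below are invented for this example and are not the ones used in our prototype; the status names and the `purchase_status` function are likewise hypothetical.

```python
import re

# Hypothetical handcrafted rules, checked in order; the first match wins.
RULES = [
    ("post-purchase", re.compile(
        r"\b(i|we)\s+(just\s+)?(bought|got|purchased|own)\b|\bmy\s+new\b",
        re.IGNORECASE)),
    ("pre-purchase", re.compile(
        r"\b(want|going|plan(ning)?|thinking)\s+(to|of|about)\s+"
        r"(buy|get|buying|getting)\b|\bshould\s+i\s+(buy|get)\b",
        re.IGNORECASE)),
]


def purchase_status(tweet):
    """Return the status of the first matching rule, or "unknown"."""
    for status, pattern in RULES:
        if pattern.search(tweet):
            return status
    return "unknown"


print(purchase_status("I just bought the Galaxy S4 and love it"))
print(purchase_status("Thinking of getting a new phone, should I buy the S4?"))
print(purchase_status("The S4 screen looks sharp"))
```

Rules like these are cheap to write and easy to inspect, but they only catch the phrasings someone thought of in advance, so many tweets will fall through to "unknown".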

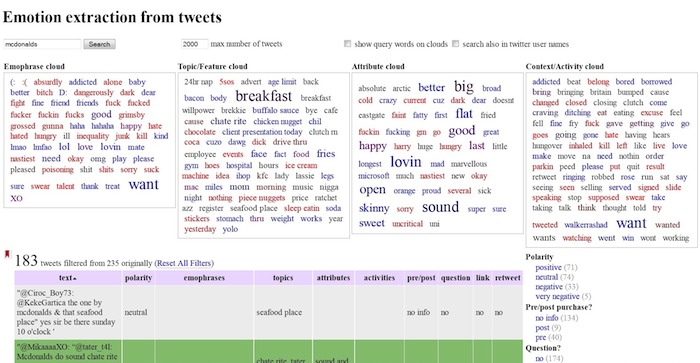

Although our approach was simple and, to keep the scope limited, we didn’t experiment with different algorithms, the results are encouraging. The screenshot above shows what a product manager at McDonald’s could see when using our prototype application.

The following table shows the accuracy results across all the dimensions, measured on our small test set of 200 tweets.

| | Stanford etcML | Bitext | SentiStrength | Our prototype | Human agreement |
|---|---|---|---|---|---|
| Polarity (3 class) | 0.49 | 0.48 | 0.57 | 0.66 | 0.69 |
| Polarity (5 class) | 0.34 | 0.40 | 0.43 | 0.50 | 0.52 |
| Emotion words | | | | 0.42 | 0.25 |
| Topic / Feature | | | | 0.35 | 0.27 |
| Context | | | | 0.38 | 0.40 |
| Attributes | | | | 0.59 | 0.60 |
| Purchase status | | | | 0.52 | 0.65 |
| Question | | | | 0.88 | 0.96 |

The numbers are quite close to the human agreement level which is great, assuming no overfitting. For some dimensions our scores are even higher but as we discussed above this unfortunately does not mean that we are better, it only means that even humans disagree to a large extent. On the polarity dimension we could directly compare our performance against some existing tools and - as is the standard procedure in such cases - we can happily announce that we are doing better.

However, we should be careful not to interpret the above results as “we are smarter than the Stanford and Bitext teams”. Their tools are generic classifiers that work well in the average case and were trained on data that was quite different from the data we were using in this project, e.g. the Stanford etcML used movie reviews as training data. Our tool, however, is custom tailored to our specific task. Moreover, we had access to the test data while fine tuning the algorithm, and although we made an effort not to cheat, we may have made some unconscious decisions that benefit our scores.

And finally here are the most important learning points for me after this project.

- If the task is well defined then simple solutions can be fine. If we know exactly what we want and how we evaluate whether we achieved it, then usually there is an easy way to get there, probably by looking up what others have done that can be reused and tuning it to our needs. It implies that it makes sense to invest a lot in defining the requirements and expectations as well as we can in the beginning of every project.

- On the other hand, complex solutions are rarely justified. They are usually costly but yield little gain. Go for heavy complexity only if you are sure that the additional couple of percent in accuracy matters. Again, you should know how to interpret “good enough” and stop once you are there.

- Custom solutions can be much better than generic off-the-shelf products. The comparison table presented above clearly shows that a solution that is specifically tailored to the task at hand can perform much better than a ready made generic solution. However, there is a risk in doing a heavily customised solution: it may turn out that in real life the algorithm runs in a quite different environment than what was used to develop the algorithm.

- Telling relevant from irrelevant pieces of information is very hard. The best example of this is question extraction: it is trivial to recognize that there is a question in the tweet but telling whether the question is relevant for a product manager (i.e. the question is about a product, service, brand or feature) is hard.

A natural follow up project would be to verify how our approach scales across languages. The fact that we did not use any NLP technology more advanced than part of speech tagging makes us hopeful that this solution can easily be extended to the dozen languages Meltwater supports.

We hope that you got a couple of relevant points out of this article. Please share your thoughts with us in the comments below.